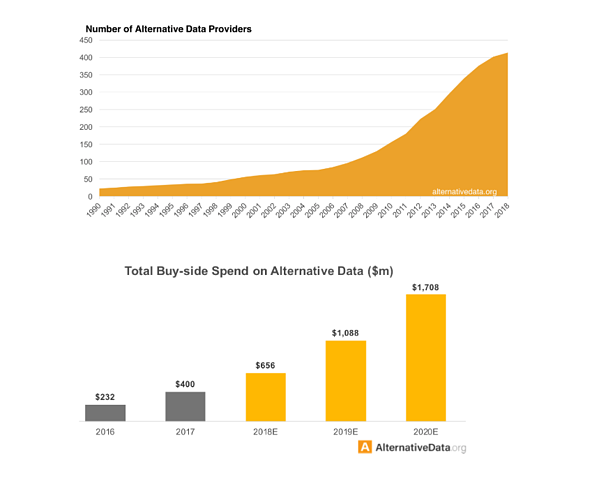

Alt-D. You can try this on your keyboard, but it won't do anything. It's just the new shortcut for hedge funds and asset managers seeking alpha. JPMorgan Chase estimated that more than $2 billion was invested in alternative data in 2017 to boost fund performance. On top of that, Deloitte indicates that the number of alternative data analysts has quadrupled in the past five years.

But these indications are only the beginning, as more asset managers predict that the impact of alternative data on the investment process will be advantageous both in the shorter and the longer term.

Rewind: What Is Alternative Data?

Financial analysts are used to working with traditional, structured datasets to support investment decisions. These datasets come in the classic tabular format of financial reporting, showing detailed company earnings or macroeconomic figures from national statistical institutions.

Alternative data is a term designating all the new types of data a desk can use to make better-informed decisions and improve its understanding of the market. These datasets can be huge, and they are often unstructured (i.e., they contain things like text and image data), which makes them very appealing to data scientists eager to reshape how asset managers define their investment strategies.

The rise of Twitter about 10 years ago was the first driver of alternative data, with a large number of use cases around sentiment analysis on the stock market. Some studies proved that correctly gauging the mood on Twitter could help predict the appetite for a particular stock, especially during the earnings season. That idea - often referred to as collective intelligence for investing - was pushed one step further with the scraping of data from crowdsourcing platforms to identify hot sectors, or the next big shot.

The amount of data generated on a day-to-day basis opens a completely new dynamic for the financial market industry. Asset managers now check performance figures from a company or economic indicators with alternative sources of information.

For example, data from cargo vessels all around the globe has already been used to understand where oil was being delivered and to infer global consumption patterns. This is now enhanced (thanks to satellite imagery, watershed sensors, and IoT devices) to exploit mispriced assets in specific regions of the globe.

Some fund managers might check the reviews for a company’s best-selling product on e-commerce websites to get a better idea of what the next quarter's results will look like. They can also check the data from different job boards to get a feel for the hiring strategy: if the number of job postings is going up, that could mean the company is going through steady, healthy growth and that the money they have invested is being put to good use.
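As a rough illustration of the job-board idea, the sketch below fits a least-squares line through weekly posting counts and reads the slope as a crude hiring-trend indicator. The function name `posting_trend` and the sample counts are hypothetical and made up for this example; a real desk would also need to clean the data, handle seasonality, and validate the signal before acting on it.

```python
from typing import Sequence


def posting_trend(counts: Sequence[float]) -> float:
    """Return the least-squares slope of job-posting counts per period.

    A positive slope means postings are rising over the window;
    a negative slope means they are falling.
    """
    n = len(counts)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2              # mean of the period indices 0..n-1
    mean_y = sum(counts) / n
    cov = sum((i - mean_x) * (c - mean_y) for i, c in enumerate(counts))
    var = sum((i - mean_x) ** 2 for i in range(n))
    return cov / var


# Hypothetical weekly posting counts for one company (illustrative only).
weekly_postings = [40, 42, 45, 47, 52, 55]
slope = posting_trend(weekly_postings)
print(f"postings change per week: {slope:.2f}")
```

A slope alone says nothing about why hiring is changing, so it is best treated as one input to check against reported figures rather than as a trading signal in itself.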

This approach can be very lucrative; some players claim that the use of alternative data has dramatically improved their performance (enough to catch the interest of more conservative players - investment bankers - who now wonder whether they should join the dance).

Alternative Data Risks

Though alternative data looks very attractive on paper, it does not come without risk. For example, alternative data sources can contain sensitive information: CCTV footage used to monitor traffic at a big-box store on Black Friday for a glimpse of consumer appetite, or credit card transactions and IoT device data bought from data brokers to understand general consumer behavior toward a brand. All of these contain personal information, and their use in an investment scenario can be restricted by regulators.

Another alternative data acquisition strategy involves industrialized data scraping from the internet, which of course needs to be compliant with websites’ policies. Although regulation is still lagging, risk and asset managers understand pretty well that making investment decisions on material that is technically unusable for such a purpose could seriously harm the business.

Stepping aside from the characteristics of the data itself, there is also the cost of bad modeling. Because the field is relatively new, the data does not always have enough history to allow for rigorous backtesting, and the quality of the signals is often harder to assess. The reputational cost of heavy trading losses on incorrect signals coming from alternative data could be disastrous, which to a certain extent explains why some players are taking their time to adopt the methodology.
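To make "assessing signal quality" a little more concrete, here is a minimal sketch of one very simple check: the directional hit rate, i.e., how often the sign of a signal matched the sign of the next period's return. The function name `hit_rate` and all numbers are hypothetical and invented for illustration. This is not a full backtest: it ignores transaction costs, position sizing, and statistical significance, and with a short history the result can easily be noise.

```python
from typing import Sequence


def hit_rate(signals: Sequence[float], next_returns: Sequence[float]) -> float:
    """Fraction of periods where the signal's sign matched the next return's sign.

    Periods with a zero signal (no view) or a zero return are skipped.
    """
    if len(signals) != len(next_returns):
        raise ValueError("signals and returns must have the same length")
    pairs = [(s, r) for s, r in zip(signals, next_returns) if s != 0 and r != 0]
    if not pairs:
        raise ValueError("no periods with both a signal and a non-zero return")
    hits = sum(1 for s, r in pairs if (s > 0) == (r > 0))
    return hits / len(pairs)


# Hypothetical data: +1 = bullish, -1 = bearish, 0 = no view.
signals = [1, -1, 1, 1, -1, 0]
next_returns = [0.02, -0.01, -0.005, 0.01, 0.003, 0.01]
rate = hit_rate(signals, next_returns)
print(f"directional hit rate: {rate:.0%}")
```

A hit rate close to 50% over a handful of periods tells you very little, which is exactly the difficulty described above when the available history is short.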

But the status quo comes with a cost, too. Bringing new techniques (like alternative data) on board too late might create a gap with the competition that is too wide to bridge later.

Talent acquisition and retention can also be problematic for firms that don’t adopt alternative data techniques. Data scientists want to explore new ideas and will be more attracted by the cutting-edge use cases a hedge fund can offer than by the realm of traditional portfolio management.

How to Capitalize while Mitigating Risks

Successful implementations of alternative data require organizations to leverage the technology available in-house while bringing different stakeholders around a common framework to assess the risks and validate the signals generated by these new strategies.

Portfolio managers will have to work more closely than ever with auditors to confirm that the Python scripts extracting large amounts of data from the web can be used, and with the compliance department to validate that sources bought from a data vendor can be used given the regulatory environment (e.g., the General Data Protection Regulation in Europe - GDPR - or the California Consumer Privacy Act - CCPA).

And that is only the beginning of the story.

Deloitte indicates that industry leaders have worked hard to put an integrated framework in place to promote idea sharing and generate greater efficiency in signal modeling. Alternative data is not here to replace the traditional way of investing, but to enhance it, and combining alternative data with traditional sources of financial reporting can open the door to a tremendous competitive advantage. That integrated framework has to cope with the different types of technology that different stakeholders are using:

• For example, data scientists tend to favor elastic computing or GPU resources to push their alternative use cases forward, while traditional quantitative researchers might just need access to relational databases and flat files.

• On the other hand, risk managers need to be able to monitor important KPIs related to model performance.

• In addition, portfolio managers need to understand the rationale behind an alternative strategy at a glance, from the source of data to the model creation. Without such a framework, deciding when to push a strategy forward based on current market conditions is much harder, and decision making slows down (when speed is ultimately critical to keeping an edge on the competition).

Then comes the question of model management. Even when a portfolio manager has to follow the main guiding principles of the fund (long-only, market neutral, distressed investment, etc.), they can have hundreds of models created by quantitative analysts at their disposal. And if the fund grows solidly, that number will only go up as more analysts are hired. Therefore, any approach should allow for faster and easier management of those strategies and how they operate on the market.

Although all these stakeholders are motivated to generate more alpha for the firm, they each look at the problem from a different angle. Only a healthy collaboration around a fluid architecture will transform the business for the better.

This approach could also be a smart way to overcome talent scarcity. With the right cooperation between desks, asset managers could identify strong internal candidates ready to join an alternative data team and save the months otherwise spent trying to hire a rare profile that combines industry knowledge and technical skills.

To sum up, a centralized framework built around technology that brings together people's skills and both traditional and alternative sources of data will help asset managers accelerate their path toward enterprise AI.

Read the detailed, technical white paper on how to use machine learning (more specifically deep learning) for better option pricing, from trader-turned-data-scientist Alex Hubert.

Alexandre Hubert is lead data scientist at Dataiku.
