At the end of 2019, I had the chance to participate in the Ford Innovation Day organized by BigML partner Thirdware. The two-day event showcased innovation projects ranging from conversational agents to predictive maintenance systems leveraging Machine Learning.

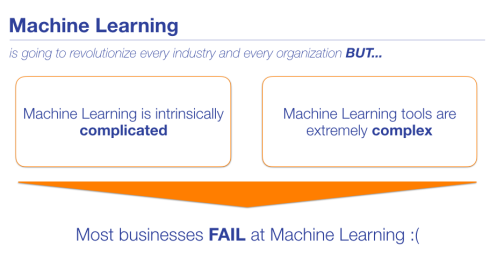

My presentation shared its title with this blog post and concentrated on the prospects of Machine Learning for automotive companies. In some ways, the automotive industry is not that different from many other industries in the global economy: it has been struggling to find its footing when it comes to putting Machine Learning front and center at scale. That’s not necessarily due to a lack of meaningful investment, either.

Let’s take a step back and look at the broader trends first. In its forward-looking Vision 2030 report on the automotive industry, McKinsey Global Institute finds that the next decade will bring slow (2%) growth in traditional vehicle sales and related aftermarket services. To boot, most of this growth in the traditional business segments will occur in emerging markets, driven by demographic and macroeconomic factors. Yet global automotive industry revenue is expected to increase by $1.5T (+30%) thanks to new business models such as shared mobility and connectivity services materializing by 2030. As a side note, shared mobility examples include car-sharing and e-hailing, while data connectivity services include specialized applications such as entertainment, remote services, and subscription-based software upgrades. In fact, 10% of cars sold in 2030 are expected to be shared vehicles, adding to special-purpose fleets and mobility-as-a-service solutions popular in dense urban areas. Various flavors of Electric Vehicles (e.g., hybrid, plug-in, battery-electric, fuel cell) will make up as much as 50% of vehicles sold by that time!

To make this new landscape possible, new competing ecosystems with more diverse players will need to emerge to deliver a much more integrated customer experience. The common denominator of this future vision seems to be highly integrated, intelligent software applications, with data-driven insights acting as the connective tissue in between. Permissioned data becomes the new currency of collaboration, software becomes much more central to everything, and it doesn’t take a genius to figure out that Machine Learning gets to play a big role in this scenario.

Sounds great, right? Not so fast!

Back to today’s reality: a 2018 report, this time from CapGemini, found only modest gains in ML systems deployed at scale among automotive OEMs, suppliers, and dealers (an increase from 7% to 10% of respondents year over year). Yet 80% of respondents mentioned Machine Learning as a strategic initiative. The gap between 10% and 80% is tremendous and worth re-emphasizing.

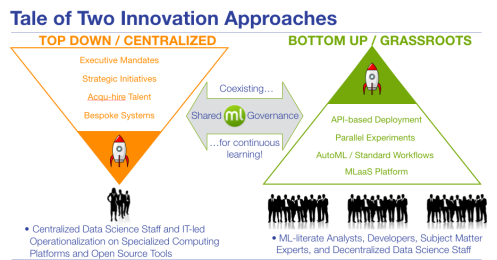

Potential use cases for automotive companies are many, touching both core operations (e.g., Predictive Maintenance, Supplier Risk Management) and support functions like Sales, Marketing, Finance, or HR. So what explains the slow uptake? Part of this outcome has been shaped by these companies’ previous expensive and failed attempts at dealing with the inherent complexity of Machine Learning. In response, most industry players seem to have changed tack and now apply a more measured approach to selecting use cases and projects. The surveyed companies that deployed at least three use cases at scale across their entire operations were dubbed “Scale Champions” in the report, which frankly is a pretty low bar considering the true upside. What’s more, the “champs” did a markedly better job of re-skilling their workforces and putting a Machine Learning governance process in place.

There’s something to be said for this top-down approach, which is often defined by executive mandates and buttressed by committees that define and prioritize the use cases of interest and identify the risks and the rules of the road. Things then get handed down to IT teams and Data Scientists, who collaborate to implement the projects and roll them out to production. It’s certainly possible to make headway through this waterfall-like modus operandi, albeit at a greater cost and a slower speed.

However, there’s also a newly emerging bottom-up approach that complements it. Thanks to a new set of easy-to-use MLaaS tools with low-code visual interfaces and built-in AutoML capabilities, subject matter experts, analysts, and even business folks can be upskilled faster to autonomously explore their own predictive ideas, which would otherwise go unexplored altogether. In this decentralized model of embedding ML in many more business processes, standardized workflows and RESTful APIs play a critical role in deploying the worthy predictive models (those with high signal-to-noise ratios) to production systems, thus eliminating the need to rewrite them from scratch with heavy IT involvement. As a bonus, a working ML governance framework inherited from the previous waterfall projects makes this agile approach even more effective in managing the related organizational risks.
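To make the role of RESTful APIs a little more concrete, here is a minimal Python sketch of how a business application might ask a deployed model for a prediction over HTTP. The endpoint URL, the model identifier, and the JSON field names are all hypothetical, chosen only for illustration; they do not describe any particular vendor’s API.

```python
import json
from urllib import request

# Hypothetical endpoint, for illustration only.
PREDICTION_URL = "https://ml.example.com/v1/predictions"


def build_prediction_request(model_id, input_data, url=PREDICTION_URL):
    """Package one prediction call as an HTTP POST with a JSON body."""
    # The "model" and "input_data" field names are assumptions.
    body = json.dumps({"model": model_id, "input_data": input_data}).encode("utf-8")
    return request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_prediction(response_body):
    """Pull the predicted value and optional confidence out of a JSON response."""
    payload = json.loads(response_body)
    return payload["prediction"], payload.get("confidence")


# Example: a predictive-maintenance query for one vehicle component.
req = build_prediction_request(
    "model/brake-wear-v1",  # hypothetical model identifier
    {"mileage_km": 82000, "avg_brake_temp_c": 310},
)
# Sending it would be request.urlopen(req).read(); that call is left out
# here because the endpoint above does not exist.
```

The point of the sketch is that the calling system only needs to know the request and response shapes. The model behind the endpoint can be retrained or replaced without anyone rewriting it inside the production application.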

This new way of thinking seems to be gathering steam with more thought leaders in the industry who are already singing the praises of an elevated level of accessibility. Take for instance Andrew Moore, who proclaimed:

“After years of hype around mysterious neural networks and the Ph.D. researchers who design them, we’re entering an age in which just about anyone can leverage the power of intelligent algorithms to solve the problems that matter to them. Ironically, although breakthroughs get the headlines, it’s accessibility that really changes the world. That’s why, after such an eventful decade, a lack of hype around machine learning may be the most exciting development yet.”

We wholeheartedly agree!

Atakan Cetinsoy is Vice President Predictive Applications at BigML.
